Progressive Delivery is the next step after Continuous Delivery: new versions are deployed to a subset of users and evaluated for correctness and performance before being rolled out to all users, and are rolled back if they do not meet some key metrics.


There are some interesting projects that make this easier in Kubernetes, and I’m going to talk about three of them that I took for a spin with a Jenkins X example project: Shipper, Istio and Flagger.

Shipper

Shipper is a project from booking.com extending Kubernetes to add sophisticated rollout strategies and multi-cluster orchestration (docs). It supports deployments from one to multiple clusters, and allows multi-region deployments.

Shipper is installed with a CLI, shipperctl, which pushes the configuration of the different clusters to manage. Note this issue with GKE contexts.

Shipper uses Helm packages for deployment, but they are not installed with Helm, so they won’t show up in helm list. Also, Deployments must use apiVersion apps/v1, or Shipper will not edit the Deployment to add the right labels and replica count.

Rollouts with Shipper are all about transitioning from an old Release, the incumbent, to a new Release, the contender. This is achieved by creating a new Application object that defines the n stages that the deployment goes through. For example, for a 3-step process:

- Staging: Deploy the new version to one pod, with no traffic

- 50/50: Deploy the new version to 50% of the pods and 50% of the traffic

- Full on: Deploy the new version to all the pods and all the traffic

    strategy:
      steps:
      - name: staging
        capacity:
          contender: 1
          incumbent: 100
        traffic:
          contender: 0
          incumbent: 100
      - name: 50/50
        capacity:
          contender: 50
          incumbent: 50
        traffic:
          contender: 50
          incumbent: 50
      - name: full on
        capacity:
          contender: 100
          incumbent: 0
        traffic:
          contender: 100
          incumbent: 0
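
To get a feel for what the three steps mean in terms of pods, here is a minimal Python sketch that turns the capacity percentages of the strategy above into pod counts. It is only an illustration: the helper names `pods_for` and `plan` are made up, and the rounding rule (any non-zero percentage yields at least one pod) is an assumption chosen to match the "one pod" staging step, not something taken from Shipper's code.

```python
import math

# Steps copied from the strategy above; values are percentages.
STEPS = [
    {"name": "staging",
     "capacity": {"contender": 1, "incumbent": 100},
     "traffic": {"contender": 0, "incumbent": 100}},
    {"name": "50/50",
     "capacity": {"contender": 50, "incumbent": 50},
     "traffic": {"contender": 50, "incumbent": 50}},
    {"name": "full on",
     "capacity": {"contender": 100, "incumbent": 0},
     "traffic": {"contender": 100, "incumbent": 0}},
]


def pods_for(percent, replicas):
    """Pods for a capacity percentage (assumption: round up, so 1% gives one pod)."""
    return math.ceil(replicas * percent / 100)


def plan(replicas):
    """Return (step name, contender pods, incumbent pods) for each step."""
    return [
        (step["name"],
         pods_for(step["capacity"]["contender"], replicas),
         pods_for(step["capacity"]["incumbent"], replicas))
        for step in STEPS
    ]


if __name__ == "__main__":
    for name, contender, incumbent in plan(10):
        print(f"{name}: contender={contender} pods, incumbent={incumbent} pods")
```

With 10 replicas, the staging step runs a single contender pod next to the 10 incumbent pods, the 50/50 step runs 5 of each, and the last step runs 10 contender pods and no incumbent pods.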

If a step in the release does not send traffic to the new pods, they can still be accessed with kubectl port-forward, e.g. kubectl port-forward mypod 8080:8080, which is useful for testing before users can see the new version.

Shipper supports the concept of multiple clusters, but treats all clusters the same way, selecting them only by region and filtering by capabilities (set in the cluster object), so there’s no option to have dev, staging and prod clusters with just one Application object. But we could have two Application objects:

- myapp-staging deploys to region “staging”

- myapp deploys to other regions

In GKE you can easily configure a multi cluster ingress that will expose the service running in multiple clusters and serve from the cluster closest to your location.

Limitations

The main limitations in Shipper:

- Chart restrictions: The Chart must have exactly one Deployment object. The name of the Deployment should be templated with {{.Release.Name}}. The Deployment object should have apiVersion: apps/v1.

- Pod-based traffic shifting: there is no way to have fine-grained traffic routing, e.g. sending 1% of the traffic to the new version; the split is based on the number of pods running.

- New Pods don’t get traffic if Shipper is not working
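
The pod-based traffic shifting limitation comes down to simple arithmetic, sketched below in Python. The sketch assumes that traffic is spread evenly across all pods behind the service; the function names are hypothetical and only serve the illustration.

```python
import math


def smallest_share(total_pods):
    """Smallest non-zero traffic share (in %) one pod can receive,
    assuming traffic is balanced evenly across all pods."""
    return 100 / total_pods


def pods_needed(target_percent):
    """Total pods required so that a single new pod receives
    at most target_percent of the traffic."""
    return math.ceil(100 / target_percent)


if __name__ == "__main__":
    print(smallest_share(10))  # one new pod out of 10 gets 10% of the traffic
    print(pods_needed(1))      # a 1% canary needs 100 pods in total
```

In other words, under this assumption a 1% canary is only possible once the application runs on about 100 pods, which is why request-level routing is needed for small percentages on small deployments.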

Istio

Istio is not a deployment tool but a service mesh. However, it is interesting because it has become very popular and allows traffic management, for example sending a percentage of the traffic to a different service, as well as other advanced networking.

In GKE it can be installed by just checking the box to enable Istio in the cluster configuration. In other clusters it can be installed manually or with Helm.

With Istio we can create a Gateway that processes all external traffic through the Ingress Gateway and create VirtualServices that manage the routing to our services. To do that, find the ingress gateway IP address and configure a wildcard DNS for it. Then create the Gateway that will route all external traffic through the Ingress Gateway:

    apiVersion: networking.istio.io/v1alpha3
    kind: Gateway
    metadata:
      name: public-gateway
      namespace: istio-system
    spec:
      selector:
        istio: ingressgateway
      servers:
      - port:
          number: 80
          name: http
          protocol: HTTP
        hosts:
        - "*"

Istio does not manage the app lifecycle, just the networking. We can create a VirtualService to send 1% of the traffic to the service deployed in a pull request or in the master branch, for all requests coming to the Ingress Gateway.

    apiVersion: networking.istio.io/v1alpha3
    kind: VirtualService
    metadata:
      name: croc-hunter-jenkinsx
      namespace: jx-production
    spec:
      gateways:
      - public-gateway.istio-system.svc.cluster.local
      - mesh
      hosts:
      - croc-hunter.istio.example.org
      http:
      - route:
        - destination:
            host: croc-hunter-jenkinsx.jx-production.svc.cluster.local
            port:
              number: 80
          weight: 99
        - destination:
            host: croc-hunter-jenkinsx.jx-staging.svc.cluster.local
            port:
              number: 80
          weight: 1
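
To illustrate what the 99/1 weights mean at the request level, here is a small Python simulation. It only models the effect of a weighted split with a seeded random choice per request; it is not how Istio or Envoy implement load balancing, and the `simulate` helper is a made-up name.

```python
import random
from collections import Counter

# Destinations and weights copied from the VirtualService above.
ROUTES = {
    "croc-hunter-jenkinsx.jx-production.svc.cluster.local": 99,
    "croc-hunter-jenkinsx.jx-staging.svc.cluster.local": 1,
}


def simulate(requests, seed=0):
    """Pick a destination per request, proportionally to the route weights."""
    rng = random.Random(seed)  # seeded so that runs are repeatable
    hosts = list(ROUTES)
    weights = list(ROUTES.values())
    return Counter(rng.choices(hosts, weights=weights, k=requests))


if __name__ == "__main__":
    total = 100_000
    for host, hits in simulate(total).items():
        print(f"{host}: {hits} requests ({100 * hits / total:.2f}%)")
```

The share going to the staging service stays close to 1% regardless of how many pods back each service, which is the key difference from pod-based balancing.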

Flagger

Flagger is a project sponsored by Weaveworks that uses Istio to automate canarying and rollbacks using metrics from Prometheus. It goes beyond what Istio provides by automating progressive rollouts and rollbacks based on those metrics.

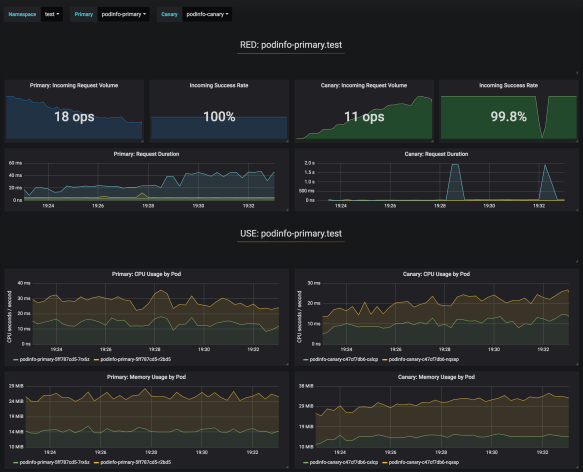

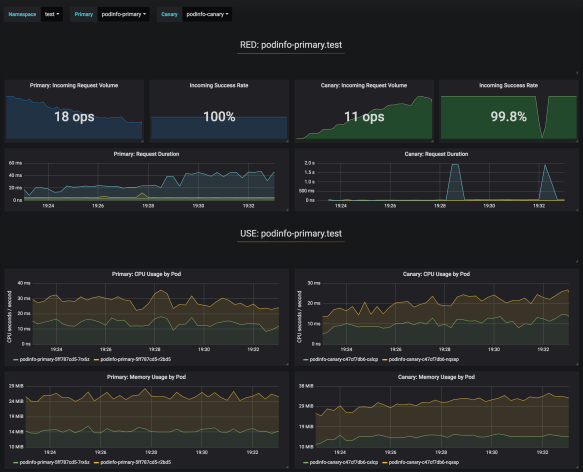

Flagger requires Istio installed with Prometheus, Servicegraph and configuration of some systems, plus the installation of the Flagger controller itself. It also offers a Grafana dashboard to monitor the deployment progress.

The deployment rollout is defined by a Canary object that will generate primary and canary Deployment objects. When the Deployment is edited, for instance to use a new image version, the Flagger controller will shift the load from 0% to 50% in 10% increments every minute. Then it will either switch to the new deployment or roll back if metrics such as response errors and request duration fail.
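
The rollout logic described above can be sketched in a few lines of Python. This is a simplified model, not Flagger's implementation: `check_metrics` is a hypothetical callback standing in for the Prometheus queries, a single failed check triggers the rollback, and the one-minute wait between steps is left out.

```python
def run_canary(check_metrics, step_weight=10, max_weight=50):
    """Shift traffic to the canary step by step.

    check_metrics(weight) -> bool is a hypothetical callback that returns
    True when metrics (e.g. error rate, request duration) look healthy.
    Returns (outcome, list of canary weights that were tried).
    """
    weights_tried = []
    weight = 0
    while weight < max_weight:
        weight += step_weight
        weights_tried.append(weight)
        # A real controller would wait one interval here (a minute in the text).
        if not check_metrics(weight):
            return "rolled back", weights_tried
    return "promoted", weights_tried


if __name__ == "__main__":
    # Healthy canary: goes through 10, 20, 30, 40, 50 and is promoted.
    print(run_canary(lambda weight: True))
    # Canary whose metrics degrade once it receives 30% of the traffic.
    print(run_canary(lambda weight: weight < 30))
```

The defaults mirror the numbers in the text (10% steps up to 50%); both are parameters because the step size and maximum weight are configurable.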

Comparison

This table summarizes the strengths and weaknesses of both Shipper and Flagger in terms of a few Progressive Delivery features.

| | Shipper | Flagger |
| --- | --- | --- |
| Traffic routing | Bare k8s balancing as % of pods | Advanced traffic routing with Istio (% of requests) |
| Deployment progress UI | No | Grafana Dashboard |
| Deployments supported | Helm charts with strong limitations | Any deployment |
| Multi cluster deployment | Yes | No |
| Canary or blue/green in different namespace (ie. jx-staging and jx-production) | No | No, but the VirtualService could be manually edited to do it |
| Canary or blue/green in different cluster | Yes, but with a hack, using a new Application and link to a new “region” | Maybe with Istio multicluster? |
| Automated rollout | No, operator must manually go through the steps | Yes, 10% traffic increase every minute, configurable |
| Automated rollback | No, operator must detect error and manually go through the steps | Yes, based on Prometheus metrics |
| Requirements | None | Istio, Prometheus |
| Alerts | | Slack |

To sum up, I see Shipper’s value in multi-cluster management and simplicity, as it does not require anything other than Kubernetes, but it comes with some serious limitations.

Flagger really goes the extra mile by automating the rollout and rollback and offering fine-grained control over traffic, at a higher complexity cost with all the extra services needed (Istio, Prometheus).

Find the example code for Shipper, Istio and Flagger.

The Jenkinsfile-Runner-Lambda project is an AWS Lambda function to run Jenkins pipelines. It will process a GitHub webhook, git clone the repository and execute the Jenkinsfile in that git repository. It allows huge scalability with 1000+ concurrent builds and pay per use with zero cost if not used.
